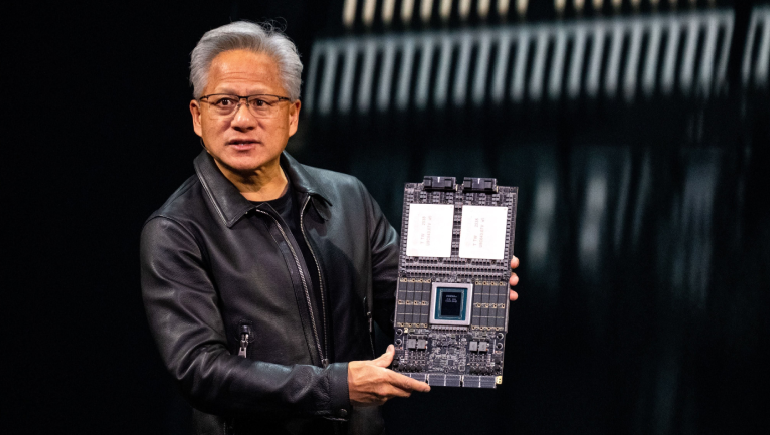

At CES 2026 in Las Vegas, Nvidia introduced its next-generation AI computing architecture, the Vera Rubin platform, a significant advance in infrastructure for large-scale artificial intelligence workloads. The announcement reflects Nvidia’s strategy to move beyond traditional GPU-centric acceleration and deliver a rack-scale, co-designed system capable of powering future reasoning-based and agentic AI models while significantly reducing cost and complexity for enterprises and cloud providers.

The Vera Rubin platform integrates six core components, spanning CPUs, GPUs, networking, and DPUs, into a unified AI compute stack.

This rack-scale design enables Nvidia to deploy multi-GPU systems as “AI supercomputers,” providing enterprise-grade capabilities for demanding workloads.

Rubin GPUs are fabricated on a 3 nm process and are said to offer a severalfold improvement in training and inference performance over the previous Blackwell generation. Early benchmarks indicate that fewer GPUs are required to achieve equivalent or superior results, which would reduce both energy consumption and total cost of ownership.

Advanced interconnects such as NVLink 6, together with optical networking components, provide the high data throughput needed to train large language models and run extended-context computations. These improvements target the communication bottlenecks that have historically limited multi-GPU AI clusters, allowing systems to scale more smoothly.

Several cloud service providers and AI infrastructure partners are set to deploy Vera Rubin systems starting in the second half of 2026. These deployments target workloads in agentic AI, advanced reasoning, and large-scale inference, which require sustained, high-throughput compute resources beyond conventional ML paradigms.

The Vera Rubin launch underscores Nvidia’s leadership in AI infrastructure. By offering an integrated platform encompassing CPUs, GPUs, networking, and DPUs, Nvidia positions itself as the central provider of the next-generation hardware stack for AI innovation. The platform emphasizes performance per dollar, scalability, and flexibility across hybrid cloud and data center environments.

Vera Rubin represents a transformative evolution in Nvidia’s portfolio, designed to support the next generation of AI systems. Its combination of high performance, energy efficiency, and scalable architecture enables enterprises and cloud providers to train and deploy large AI models more effectively. With early adoption already underway, Vera Rubin is poised to influence AI infrastructure design in 2026 and beyond, reinforcing Nvidia’s position at the forefront of the AI revolution.
